Open Sourcing Agentspan: Durable AI Agents

Everyone is building agents right now.

Engineers automating CI pipelines. Data teams pulling from a dozen sources and generating reports autonomously. Support teams triaging tickets. PMs synthesizing weekly status reports that used to take half a day. Pick a framework, write some Python, and you have something that feels like magic within an hour.

But then you try to actually rely on it.

An agent hits a rate limit at step 7. An unhandled exception crashes the process mid-pipeline. A support bot goes down during peak hours and nobody notices until the queue is on fire. Process dies, agent dies, progress lost — every API call, every token spent, gone.

The problem isn’t the agents. They work beautifully in a demo. The problem is there is nowhere to run them that survives the real world.

Today, we are open-sourcing Agentspan to fix that.

What is Agentspan

Agentspan is a durable runtime for AI agents and an open-source SDK to build, run, and observe agents. It is MIT licensed.

The SDK gives you a clean, powerful way to define agents:

    from agentspan.agents import Agent, run, tool

    @tool
    def search_web(query: str) -> str:
        """Search the web for information."""
        # brave_search is a placeholder for your own search helper (not defined here).
        return brave_search(query)

    researcher = Agent(
        name="researcher",
        model="openai/gpt-4o",
        instructions="Research the topic thoroughly.",
        tools=[search_web],
    )

    result = run(researcher, "State of AI agents in 2026")
    print(result.output["result"])

One class — Agent. One decorator — @tool. One function — run(). That is the entire surface area for building an agent.

The runtime makes that agent indestructible. When you call run(), Agentspan compiles your agent definition into a durable workflow powered by battle-tested Conductor — open-source orchestration used by Netflix, Tesla, and LinkedIn that has already powered billions of executions in production. The server owns the execution state, not your process. Kill the terminal, crash the worker process, restart the machine. The agent keeps running. Reconnect from any process, any machine, and pick up from the exact step.

If checkpointing is auto-saving a Word document every 5 minutes, Agentspan is Google Docs — the state lives on the server, there is nothing to save or restore.

    pip install agentspan
    agentspan server start

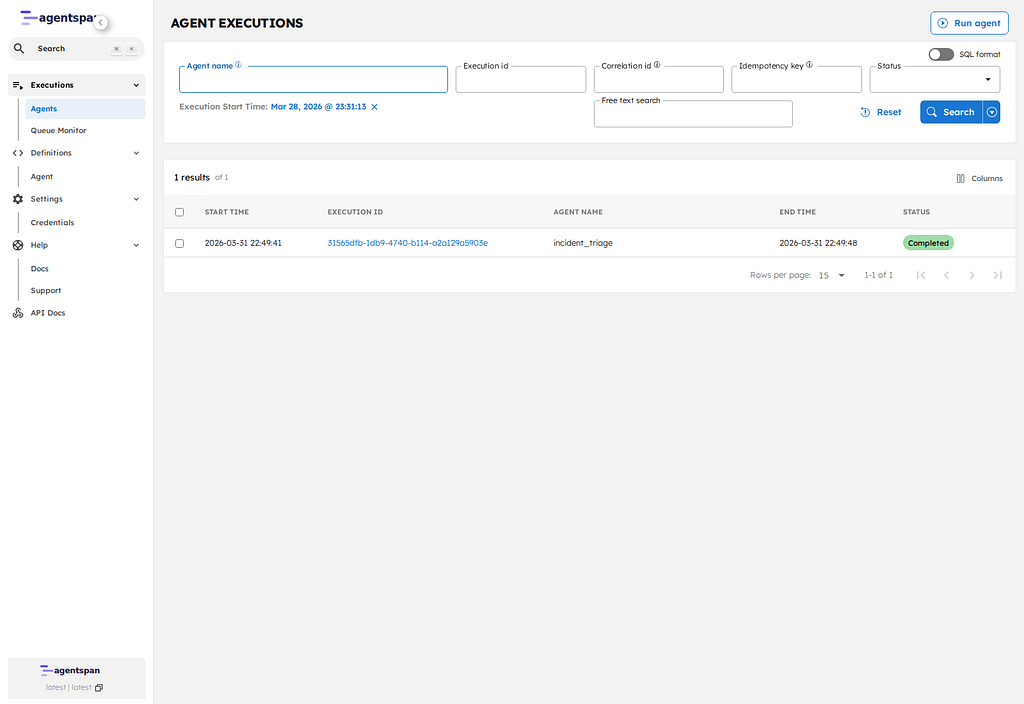

Two commands give you a local server with a visual dashboard at localhost. No account needed and no API key for Agentspan. Just point at your LLM and go.

What ships today

Durable execution — zero configuration. Step 7 of 10 completes, the process crashes, execution resumes at step 8. No checkpointer to set up. No database to configure. No save/restore code to write. Durability is the default, not an opt-in feature.
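To make the "resumes at step 8" behavior concrete, here is a minimal, standard-library-only sketch of step-level durability. It is not Agentspan's implementation: `run_durably`, `CrashError`, and the JSON state file are hypothetical stand-ins for state that, in Agentspan, lives on the server rather than in your process.

```python
import json
import tempfile
from pathlib import Path


class CrashError(RuntimeError):
    """Simulated process crash (hypothetical, for the demo only)."""


def run_durably(steps, state_path: Path, crash_after=None):
    """Run steps in order, persisting progress after every completed step.

    `state_path` stands in for a server-side store that outlives the process.
    """
    # Load prior progress if an earlier run left any behind.
    if state_path.exists():
        state = json.loads(state_path.read_text())
    else:
        state = {"next_step": 0, "results": []}

    for index in range(state["next_step"], len(steps)):
        state["results"].append(steps[index](index))
        state["next_step"] = index + 1
        state_path.write_text(json.dumps(state))  # persist before moving on
        if crash_after is not None and index + 1 == crash_after:
            raise CrashError(f"simulated crash after step {index + 1}")
    return state["results"]


def demo():
    calls = []  # records which steps actually executed, across both runs

    def make_step(n):
        def step(_index):
            calls.append(n)
            return f"step-{n}-done"
        return step

    steps = [make_step(n) for n in range(1, 11)]
    with tempfile.TemporaryDirectory() as tmp:
        state_path = Path(tmp) / "state.json"
        try:
            run_durably(steps, state_path, crash_after=7)  # dies after step 7
        except CrashError:
            pass
        results = run_durably(steps, state_path)  # "restart": resumes at step 8
    return calls, results
```

Calling `demo()` runs steps 1 through 7, simulates a crash, and then a second call picks up at step 8 without re-running anything. The design point is that progress is recorded outside the process after each step, so a restart only needs to read where it left off.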

Human-in-the-loop that actually holds. One decorator:

    from agentspan.agents import Agent, AgentRuntime, EventType, tool

    @tool(approval_required=True)
    def execute_trade(symbol: str, amount: float) -> str:
        """Execute a trade. Requires human approval."""
        # broker is a placeholder for your own brokerage client (not defined here).
        return broker.execute(symbol, amount)

    trader = Agent(
        name="trader",
        model="openai/gpt-4o",
        tools=[execute_trade],
        instructions="You are a trading assistant.",
    )

    with AgentRuntime() as runtime:
        handle = runtime.start(trader, "Buy 100 shares of AAPL")

        for event in handle.stream():
            if event.type == EventType.WAITING:
                # Agent is paused server-side. Approve now, or tomorrow.
                handle.approve()  # or handle.reject("Too risky")
            elif event.type == EventType.DONE:
                print(event.output)

The agent pauses and holds its state on the server, indefinitely. No blocked threads, no timeouts. A human can approve from Slack, a web dashboard, the CLI, or through any other method whenever they are ready — hours or days later. The agent resumes exactly where it left off.
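Why does a server-side pause need no blocked thread? One way to picture it: the pending tool call is stored as data, and approval is just a later write against that record. The sketch below illustrates the idea with the standard library only. `PausedCall` and `ApprovalStore` are hypothetical names, and the in-memory dict stands in for server-side state; this is not how Agentspan is implemented.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Optional


@dataclass
class PausedCall:
    """A tool call recorded as data while it waits for a human decision."""
    tool_name: str
    arguments: dict
    status: str = "WAITING"  # WAITING -> APPROVED or REJECTED
    result: Optional[str] = None
    reason: Optional[str] = None


@dataclass
class ApprovalStore:
    """Hypothetical stand-in for server-side state (here, just a dict)."""
    tools: Dict[str, Callable[..., str]]
    pending: Dict[int, PausedCall] = field(default_factory=dict)
    next_id: int = 1

    def request(self, tool_name: str, arguments: dict) -> int:
        """Record the call and return at once; nothing waits on a thread."""
        call_id = self.next_id
        self.next_id += 1
        self.pending[call_id] = PausedCall(tool_name, arguments)
        return call_id

    def _waiting(self, call_id: int) -> PausedCall:
        call = self.pending[call_id]
        if call.status != "WAITING":
            raise ValueError(f"call {call_id} is already {call.status}")
        return call

    def approve(self, call_id: int) -> str:
        """Run the tool only now, whenever the human gets to it."""
        call = self._waiting(call_id)
        call.result = self.tools[call.tool_name](**call.arguments)
        call.status = "APPROVED"
        return call.result

    def reject(self, call_id: int, reason: str) -> None:
        """Close the call without ever running the tool."""
        call = self._waiting(call_id)
        call.status = "REJECTED"
        call.reason = reason
```

Because `request()` returns immediately and the record simply sits in the store, the gap between request and `approve()` can be seconds or days; the tool body runs only at approval time.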

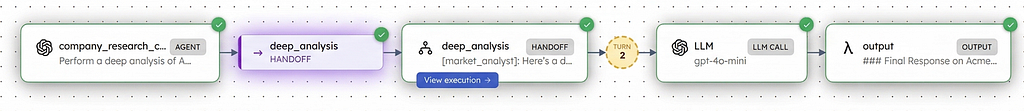

8 multi-agent strategies. Sequential pipelines, parallel fan-out, LLM-driven handoffs, router agents, swarm orchestration, round robin, random, and manual human selection. All through one primitive:

    from agentspan.agents import Agent, Strategy, run

    # Chain agents with >>
    result = run(researcher >> writer >> editor, "AI agents in 2026")

    # Run agents in parallel
    team = Agent(
        name="analysis_team",
        agents=[market_analyst, risk_analyst, financial_analyst],
        strategy=Strategy.PARALLEL,
    )

The same Agent class scales from a weekend prototype to a production system with dozens of specialized agents.
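For readers curious how a `>>` chain and a parallel fan-out can hang off ordinary Python objects, here is a small sketch using operator overloading and a thread pool. `Step`, `Pipeline`, and `fan_out` are hypothetical names invented for illustration, and plain functions stand in for agents; Agentspan's own composition machinery may work differently.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Step:
    """A named unit of work; a plain function stands in for an agent."""
    name: str
    fn: Callable[[str], str]

    def __rshift__(self, other):
        # step >> x starts a pipeline, then defers to Pipeline.__rshift__
        return Pipeline([self]) >> other


@dataclass
class Pipeline:
    steps: List[Step]

    def __rshift__(self, other):
        extra = other.steps if isinstance(other, Pipeline) else [other]
        return Pipeline(self.steps + extra)

    def run(self, text: str) -> str:
        # Sequential strategy: each step's output feeds the next step.
        for step in self.steps:
            text = step.fn(text)
        return text


def fan_out(steps: List[Step], text: str) -> Dict[str, str]:
    """Parallel strategy: every step sees the same input concurrently."""
    with ThreadPoolExecutor() as pool:
        futures = {step.name: pool.submit(step.fn, text) for step in steps}
        return {name: future.result() for name, future in futures.items()}
```

With three steps `a`, `b`, and `c`, `(a >> b >> c).run("x")` applies them left to right, while `fan_out([a, b, c], "x")` returns one result per step keyed by name.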

Production guardrails. Regex patterns, LLM-as-judge, and custom Python functions, each with four ways to respond to a failure: retry, raise an error, auto-fix, or escalate to a human. Because “the model sometimes says weird things” isn’t acceptable in production.
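As an illustration of those four responses, the sketch below wraps a text generator with a regex check and a failure policy. Everything here (`OnFail`, `guarded`, `GuardrailError`, `NeedsHumanReview`, and the SSN pattern) is a hypothetical example written for this post, not Agentspan's guardrail API.

```python
import re
from enum import Enum
from typing import Callable, Optional


class OnFail(Enum):
    RETRY = "retry"
    RAISE = "raise"
    FIX = "fix"
    HUMAN = "human"


class GuardrailError(Exception):
    """The output failed the check and could not be recovered."""


class NeedsHumanReview(Exception):
    """The output failed the check and is handed to a person."""


SSN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def no_ssn(text: str) -> bool:
    """Regex guardrail: passes when no SSN-shaped string is present."""
    return SSN.search(text) is None


def redact_ssn(text: str) -> str:
    return SSN.sub("[REDACTED]", text)


def guarded(
    generate: Callable[[], str],
    check: Callable[[str], bool],
    on_fail: OnFail,
    fix: Optional[Callable[[str], str]] = None,
    max_retries: int = 2,
) -> str:
    """Return a checked output, applying the chosen policy on failure."""
    output = generate()
    if check(output):
        return output
    if on_fail is OnFail.RETRY:
        for _ in range(max_retries):
            output = generate()
            if check(output):
                return output
        raise GuardrailError("output still failing after retries")
    if on_fail is OnFail.RAISE:
        raise GuardrailError("output failed guardrail")
    if on_fail is OnFail.FIX:
        if fix is None:
            raise ValueError("OnFail.FIX requires a fix function")
        fixed = fix(output)
        if not check(fixed):
            raise GuardrailError("fix did not produce a passing output")
        return fixed
    raise NeedsHumanReview(output)  # OnFail.HUMAN
```

The same check can be paired with different policies per use case: redact for a support bot, raise in a batch job, escalate where a person should make the call.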

Server-side tools. Point at an OpenAPI spec or MCP server. Endpoints auto-discover and execute entirely server-side — no worker infrastructure needed. Your existing APIs become agent tools with a single line.
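Endpoint discovery from an OpenAPI document is mostly a walk over its `paths` object. The sketch below shows that walk on a spec already loaded as a Python dict. `discover_tools` and the shape of the returned descriptors are assumptions made for illustration; Agentspan's actual discovery logic is not shown here.

```python
import re

# HTTP method keys allowed inside an OpenAPI 3 path item.
HTTP_METHODS = {"get", "put", "post", "delete", "patch", "head", "options", "trace"}


def discover_tools(spec: dict) -> list:
    """Turn each operation in an OpenAPI 3 document into a tool descriptor."""
    tools = []
    for path, item in spec.get("paths", {}).items():
        for method, operation in item.items():
            if method not in HTTP_METHODS:
                continue  # skip path-level keys such as "parameters"
            # Fall back to a name derived from method and path when the
            # operation has no operationId.
            fallback = method + "_" + re.sub(r"[^0-9a-zA-Z]+", "_", path).strip("_")
            tools.append({
                "name": operation.get("operationId") or fallback,
                "description": operation.get("summary", ""),
                "method": method.upper(),
                "path": path,
                # $ref parameters carry no inline name and are skipped here.
                "parameters": [
                    p["name"] for p in operation.get("parameters", []) if "name" in p
                ],
            })
    return tools
```

Each descriptor carries enough to present the endpoint to a model (name, description, parameters) and to execute it later (method, path).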

Full observability. Every LLM call, every tool invocation, every decision point, every token spent. Stored, queryable, and replayable. A visual dashboard purpose-built for agent debugging. OpenTelemetry and Prometheus out of the box.

Agent testing in CI. Deterministic tests that run in milliseconds with no LLM calls and no running server:

    from agentspan.agents.testing import mock_run, MockEvent, expect

    result = mock_run(
        agent,
        "Capital of France?",
        events=[
            MockEvent.tool_call("search", {"query": "capital of France"}),
            MockEvent.tool_result("search", "Paris is the capital."),
            MockEvent.done("The capital of France is Paris."),
        ],
    )

    expect(result).completed().output_contains("Paris").used_tool("search")

Multiple LLM providers. OpenAI, Anthropic, Google Gemini, Mistral, Groq, Ollama for local models, and more. One string to switch.
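The `"openai/gpt-4o"` string used in the examples suggests a `provider/model` convention. A minimal sketch of how such a string could be split and dispatched follows. `parse_model`, `complete`, and the `CLIENTS` registry are hypothetical, the lambdas are fake clients that only echo their input, and `"ollama/llama3"` in the note below is an illustrative model string, not one taken from Agentspan's docs.

```python
from typing import Callable, Dict, Tuple


def parse_model(model: str) -> Tuple[str, str]:
    """Split 'provider/model' on the first slash."""
    provider, sep, name = model.partition("/")
    if not (sep and provider and name):
        raise ValueError(f"expected 'provider/model', got {model!r}")
    return provider, name


# Hypothetical registry: real clients would call each provider's API.
CLIENTS: Dict[str, Callable[[str, str], str]] = {
    "openai": lambda name, prompt: f"[openai:{name}] {prompt}",
    "ollama": lambda name, prompt: f"[ollama:{name}] {prompt}",
}


def complete(model: str, prompt: str) -> str:
    """Route a prompt to whichever provider the model string names."""
    provider, name = parse_model(model)
    try:
        client = CLIENTS[provider]
    except KeyError:
        raise ValueError(f"unknown provider {provider!r}") from None
    return client(name, prompt)
```

Under this convention, switching from a hosted model to a local one means changing `"openai/gpt-4o"` to something like `"ollama/llama3"` and nothing else in the calling code.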

Works with what you have already built

Already invested in a framework? Keep your code.

Pass your OpenAI Agents SDK agents directly to Agentspan’s run(). Your handoffs, tools, and guardrails stay identical. Agentspan adds durable execution underneath.

Same for Google ADK. Your ADK pipeline stays intact. Agentspan wraps the execution layer — persistence and observability with no restructuring.

Same for LangGraph. Your graph, nodes, and edges stay identical. Agentspan compiles them into durable workflows.

No rewrites. No new abstractions to learn. Your existing agents, made durable.

210+ runnable examples

Not documentation. Running code.

- 70+ core examples — from hello world to multi-agent pipelines with guardrails, streaming, human-in-the-loop, RAG, and code execution

- 40 Google ADK examples — your ADK agents, now durable

- 46 LangGraph examples — complex graph workflows, made crash-safe

- 13 OpenAI Agents SDK examples — familiar SDK, production-grade runtime

Every example runs out of the box against your local Agentspan server. Pick one, run it, break it, rebuild it.

Open source. MIT licensed. The whole thing.

CLI (Go). Server (Java/Spring Boot). Python SDK. TypeScript SDK. UI (React). All of it — open under MIT. No artificial limitations. No feature gates. No “contact sales for the good parts.”

Try it now

Python SDK path:

    pip install agentspan
    agentspan server start

Standalone CLI path:

    npm install -g @agentspan/agentspan
    agentspan server start

GitHub: github.com/agentspan-ai/agentspan

Discord: https://discord.com/invite/ajcA66JcKq

Docs: agentspan.ai/docs

Examples: agentspan.ai/examples

Your agents were built to last. Give them Agentspan.